Robots

Important: This article only applies to customers who have purchased DIY-Website Builder after January 2020.

The robots.txt file contains directives for search engines like Google and Yahoo, specifying which pages of your website their crawlers may visit and which they should skip. By editing this file, you gain more control over how search engines crawl and index your site's content. By default, the robots.txt file allows search engines to crawl all pages of your website.
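For orientation, a robots.txt file that allows every crawler to visit every page typically looks like the sketch below. This is a generic illustration of the standard format, not a copy of the file the Site Editor generates; the automatically generated file may contain additional lines.

```
# Applies to all crawlers
User-agent: *
# An empty Disallow value means nothing is blocked
Disallow:
```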

Please Note: Pages hidden from search engines in the Site Editor remain hidden regardless of what the robots.txt file contains.

Editing the Robots.txt File

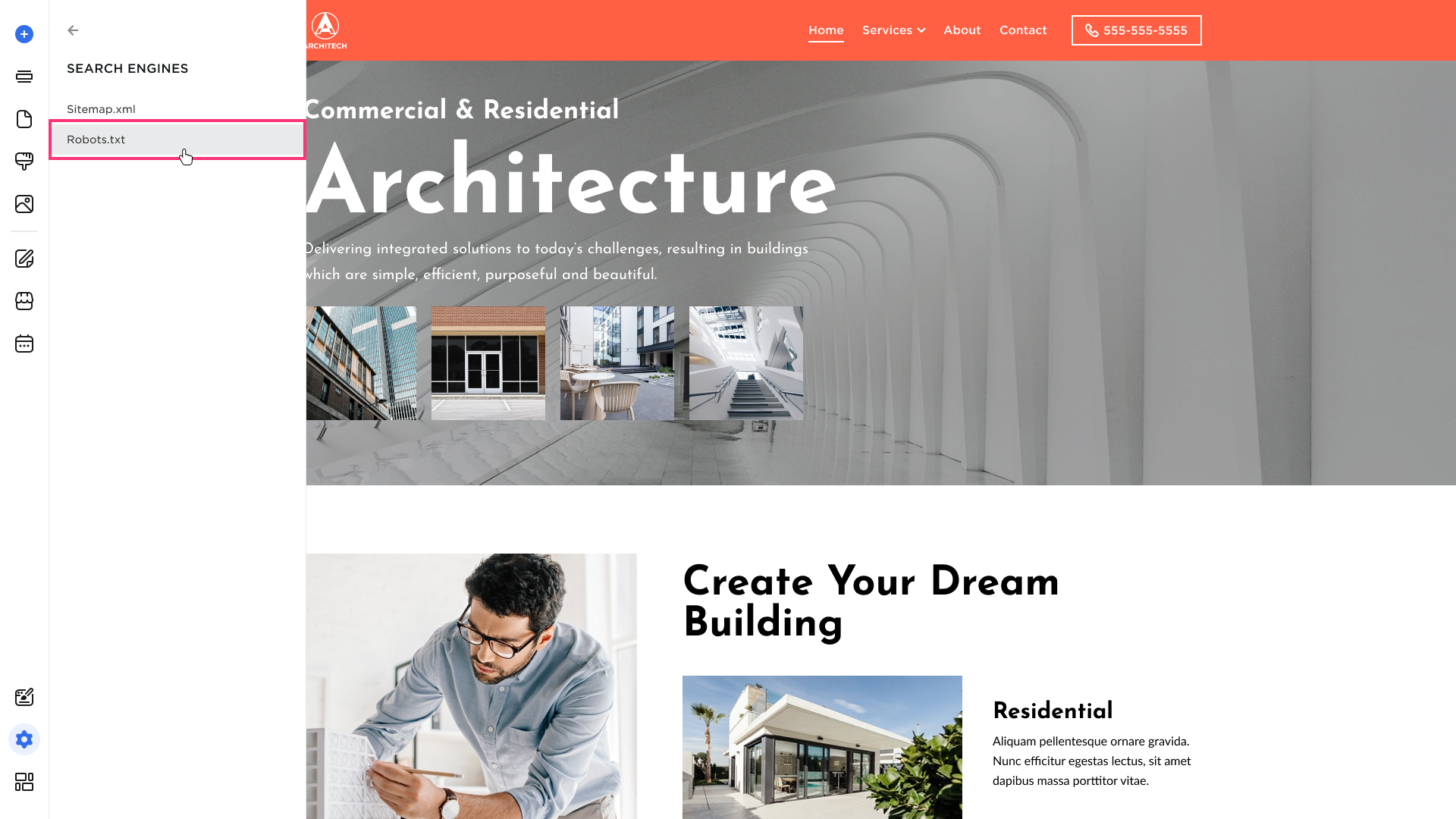

- Hover over the left sidebar of the Site Editor and select Settings.

- Navigate to Search Engines > Robots.txt.

- Click Disable auto-generation to make the Robots.txt file editable, then click the Edit icon on the right and make your changes.

- Click Save.
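As an illustration of what an edited file might contain, the sketch below blocks all crawlers from two folders and points them to a sitemap. The paths `/private/` and `/drafts/` and the `www.example.com` address are hypothetical placeholders; replace them with paths and a domain that actually exist on your site.

```
# Rules for all crawlers
User-agent: *
# Hypothetical folders to keep crawlers out of
Disallow: /private/
Disallow: /drafts/

# Hypothetical sitemap location
Sitemap: https://www.example.com/sitemap.xml
```

Each `Disallow` line matches URLs that begin with the given path, so a single rule covers every page inside that folder.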

Resetting the Robots.txt File

You can restore the robots.txt file to its default settings in two ways in the Site Editor:

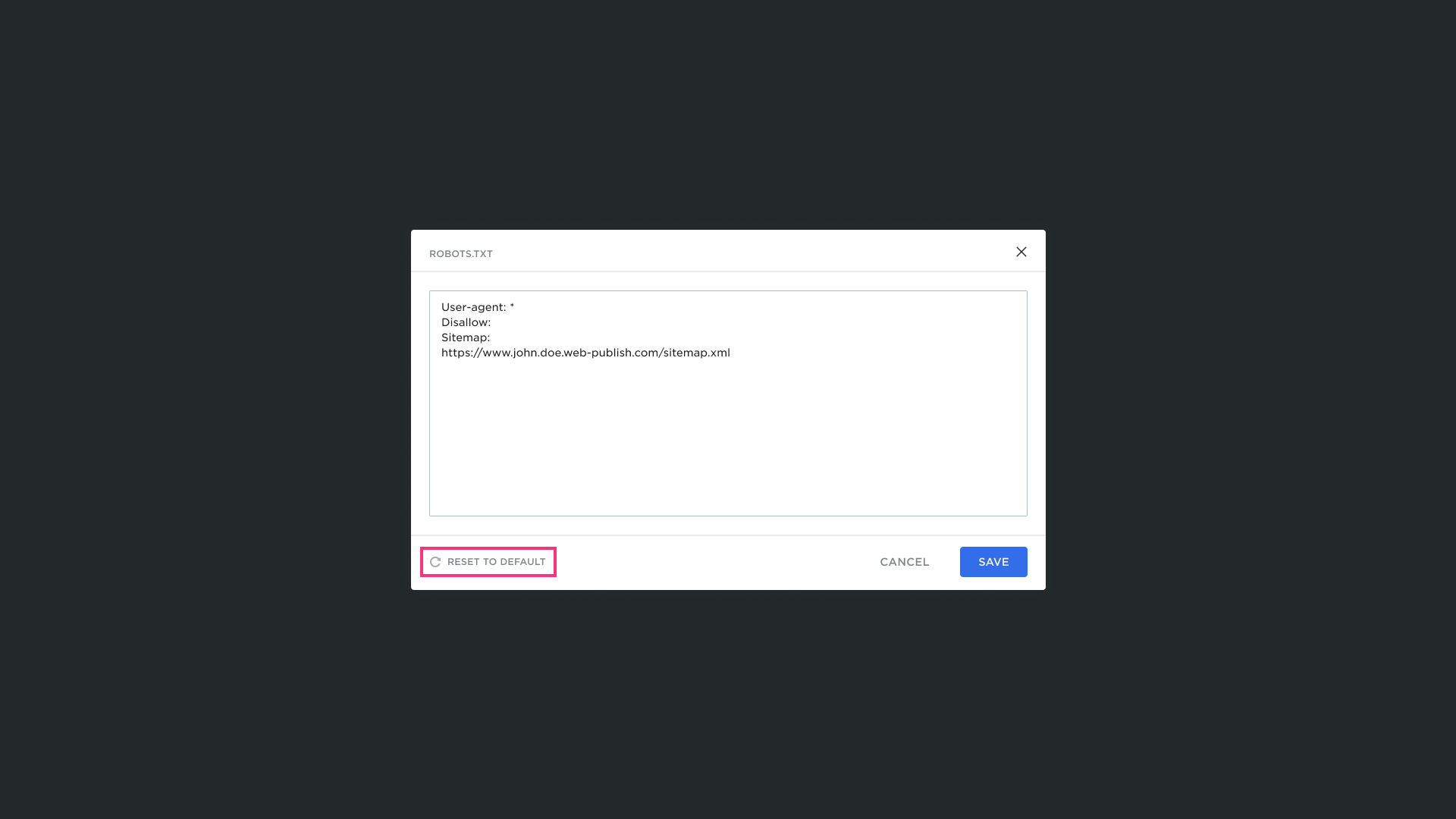

- Manual Reset: Click the Reset to Default button while in the Robots.txt editing mode.

- Enable Auto-Generation: Click the Enable auto-generation button and confirm by clicking Yes.

Please Note:

- Turning on auto-generation automatically erases all manual changes made to the robots.txt file.

- While auto-generation is enabled, the robots.txt file can't be edited.
